GeoLocation Positional Accuracy and Its Challenges

By Gary Angel

February 5, 2019

To understand why ML became the centerpiece of our location analytics strategy, you have to understand why we had a problem in the first place. In this post, I’m going to describe how traditional location analytics systems position shoppers in the store, explain why that approach doesn’t work well, and show why ML is part of a potential solution.

Let’s start with how electronic systems track shopper movement. Stores are equipped with a set of electronic detectors that listen for signals from phones or other devices. These are typically Wi-Fi listeners, Bluetooth listeners, or both. In some cases, they are Wi-Fi access points whose primary purpose is providing internet access. In other cases, they are dedicated measurement listeners that do nothing but vacuum up signals.

These detectors are typically placed in a grid around the store.

With this array of detectors in place, we don’t just capture signals; we can count on capturing each signal on multiple detectors. That’s critical.

When a device emits a signal, multiple detectors will “hear” the signal.

But because (as we all know) signals weaken with distance, only detectors close enough to the emitter will pick up the signal. What’s more, each detector records how strong the signal it heard actually was.

The process of determining the position of an emitter from the known positions of the detectors and the signal strength at each detection point is called trilateration. It’s a geometric exercise based on the known properties of the signal and how it diminishes over distance.

Let’s say a detector picks up a signal with a strength of -57. (Signal strengths are reported as negative numbers, typically in dBm, and a -57 is stronger than a -91. This is both intuitive, since -57 is the bigger number, and counter-intuitive, since most folks aren’t used to dealing in negative numbers.) Using a pre-defined power curve, you can translate a signal strength into an expected distance. Let’s say 18 feet is the distance we’d expect a phone to be from the detector when the signal strength is -57.
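
The post doesn’t say which power curve it uses. A common textbook choice is the log-distance path-loss model, in which estimated distance grows exponentially as signal strength drops. The sketch below assumes that model; the reference strength at 1 foot (`RSSI_AT_1FT = -32`) and the path-loss exponent (`PATH_LOSS_EXPONENT = 2.0`, the free-space value) are hypothetical numbers chosen so that -57 lands near 18 feet.

```python
# Minimal sketch of a "power curve": the log-distance path-loss model.
# Both constants are hypothetical; real systems calibrate them per detector.
RSSI_AT_1FT = -32.0        # assumed signal strength (dBm) measured 1 foot away
PATH_LOSS_EXPONENT = 2.0   # 2.0 is the free-space value; indoors it varies


def rssi_to_distance(rssi_dbm: float,
                     rssi_at_1ft: float = RSSI_AT_1FT,
                     n: float = PATH_LOSS_EXPONENT) -> float:
    """Invert RSSI = rssi_at_1ft - 10 * n * log10(d) to get d in feet."""
    return 10 ** ((rssi_at_1ft - rssi_dbm) / (10 * n))


if __name__ == "__main__":
    for rssi in (-57, -91):
        print(f"{rssi} dBm -> {rssi_to_distance(rssi):.1f} ft")
```

With these made-up constants, -57 dBm maps to about 17.8 feet, and every 20 dB drop multiplies the estimated distance by ten.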

That alone doesn’t tell us where the phone is. But we do know that it’s likely somewhere on the circumference of a circle with a radius of 18 feet, centered on the detector.

That’s obviously not good enough for placing the device, which is why having multiple detectors within range is so critical. If we repeat this process for three detectors, we can, in principle, home in on exactly where the device is: the point where the three circles intersect.

It’s kind of like building a Venn Diagram, right?

Trilateration is a geometric process. That’s nice because it can be done very quickly, and it doesn’t require any information beyond the positions of the detectors and the received signal strength at each one.
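
The geometry reduces to a small linear-algebra problem. Subtracting one circle’s equation from another cancels the squared terms and leaves a straight line, so three or more circles give a linear system. This is a minimal sketch of one standard approach (linearized least-squares trilateration), not necessarily what any particular vendor ships; the function name `trilaterate` and the example coordinates are hypothetical.

```python
import math
from typing import Sequence, Tuple

Point = Tuple[float, float]


def trilaterate(detectors: Sequence[Point], radii: Sequence[float]) -> Point:
    """Estimate (x, y) from >= 3 detector positions and estimated distances.

    Detector i defines a circle (x - xi)^2 + (y - yi)^2 = ri^2.
    Subtracting circle 0 from circle i removes the quadratic terms, leaving
    a linear equation a_i * x + b_i * y = c_i. With more than three detectors
    the system is overdetermined, so it is solved by ordinary least squares.
    """
    if len(detectors) < 3 or len(detectors) != len(radii):
        raise ValueError("need at least three detectors, one radius each")
    x0, y0 = detectors[0]
    r0 = radii[0]
    # Accumulate the 2x2 normal equations (A^T A) p = A^T c directly.
    saa = sab = sbb = sac = sbc = 0.0
    for (xi, yi), ri in zip(detectors[1:], radii[1:]):
        a = 2.0 * (xi - x0)
        b = 2.0 * (yi - y0)
        c = r0**2 - ri**2 + xi**2 - x0**2 + yi**2 - y0**2
        saa += a * a
        sab += a * b
        sbb += b * b
        sac += a * c
        sbc += b * c
    det = saa * sbb - sab * sab
    if abs(det) < 1e-12:
        raise ValueError("detectors are collinear; position is ambiguous")
    # Cramer's rule on the 2x2 normal equations.
    x = (sac * sbb - sab * sbc) / det
    y = (saa * sbc - sab * sac) / det
    return x, y


if __name__ == "__main__":
    detectors = [(0.0, 0.0), (30.0, 0.0), (0.0, 30.0)]
    phone = (10.0, 10.0)
    radii = [math.dist(d, phone) for d in detectors]  # perfect distances
    print(trilaterate(detectors, radii))
```

Fed perfect distances, this recovers the phone’s true position. The catch, as the rest of this post explains, is that indoor distances are never perfect.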

It’s all great, except when it isn’t. And when it isn’t turns out to be nearly always. There’s nothing wrong with the basic theory of trilateration; it ought to work pretty well. The problem lies in the unique challenges of indoor spaces. The core of trilateration is the power-to-distance curve. It’s not a perfect curve to begin with (the first six to eight feet don’t follow the normal slope), but indoors it’s almost always badly wrong.

The problem is that indoor surfaces absorb and reflect the signals, creating an environment where almost every signal is distorted by the time it reaches the detector.

When that happens, trilateration breaks down fast. You can have situations where the detection circles don’t overlap at all, where several detectors each look like the one closest to the device, or where two separate groups of detectors trilaterate to completely different locations. And routinely, the overlap area simply lands in the wrong place. If, for example, a wall is absorbing some of the signal between one side of the store and a detector, then EVERY SINGLE SIGNAL from that side will be positioned too far away from that detector.
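
To see why a wall biases every reading in the same direction, assume a hypothetical log-distance power curve and a wall that silently absorbs a fixed number of decibels (6 dB here, an illustrative figure). Because the curve knows nothing about the wall, each distance estimate is multiplied by the same factor, 10^(L / (10n)), where L is the extra loss and n the path-loss exponent. The constants and function names below are assumptions for illustration.

```python
import math

RSSI_AT_1FT = -32.0       # hypothetical calibration of the power curve
PATH_LOSS_EXPONENT = 2.0  # hypothetical free-space exponent


def expected_rssi(distance_ft: float, extra_loss_db: float = 0.0) -> float:
    """Strength the curve predicts at a distance, minus unmodeled attenuation."""
    return (RSSI_AT_1FT
            - 10 * PATH_LOSS_EXPONENT * math.log10(distance_ft)
            - extra_loss_db)


def rssi_to_distance(rssi_dbm: float) -> float:
    """Invert the curve, which has no idea the wall exists."""
    return 10 ** ((RSSI_AT_1FT - rssi_dbm) / (10 * PATH_LOSS_EXPONENT))


def distance_inflation(true_distance_ft: float, wall_loss_db: float) -> float:
    """Ratio of estimated to true distance when a wall eats wall_loss_db."""
    observed = expected_rssi(true_distance_ft, wall_loss_db)
    return rssi_to_distance(observed) / true_distance_ft


if __name__ == "__main__":
    for d in (10.0, 40.0):
        print(d, round(distance_inflation(d, wall_loss_db=6.0), 3))
```

With 6 dB of loss and n = 2 the factor is about 2, so a phone 10 feet from the detector is placed roughly 20 feet away, and one at 40 feet roughly 80 feet away. The error never averages out, because it always points the same way.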

The intricacies of the actual store make trilateration deeply and consistently inaccurate.

So what to do?

The basic solution to the problem is fairly obvious and well understood. It’s called fingerprinting. To fingerprint an area, you position yourself at a spot in the store, emit a signal, and then see how strong the signal is at each detection point. That set of signal strengths becomes the fingerprint for that place in the store. If you later see a pattern of signal strengths that matches it, you can assume the device is in that place.
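
In its simplest form, matching is a nearest-neighbor lookup: compare the observed pattern with each stored fingerprint and pick the closest one. This is a minimal sketch, assuming Euclidean distance over signal strengths and a hypothetical -100 dBm floor for detectors that heard nothing; the detector IDs, location names, and readings are made up.

```python
import math
from typing import Dict, Mapping

# Hypothetical fingerprint map: location -> {detector id: RSSI in dBm}.
# In practice these values come from walking the store and recording probes.
FINGERPRINTS: Dict[str, Dict[str, float]] = {
    "entrance":    {"d1": -48.0, "d2": -71.0, "d3": -83.0},
    "electronics": {"d1": -74.0, "d2": -50.0, "d3": -69.0},
    "checkout":    {"d1": -80.0, "d2": -66.0, "d3": -52.0},
}
MISSING_DBM = -100.0  # assumed floor for "this detector did not hear it"


def fingerprint_distance(a: Mapping[str, float], b: Mapping[str, float]) -> float:
    """Euclidean distance between two RSSI patterns over all detectors seen."""
    detectors = set(a) | set(b)
    return math.sqrt(sum((a.get(d, MISSING_DBM) - b.get(d, MISSING_DBM)) ** 2
                         for d in detectors))


def match_fingerprint(observed: Mapping[str, float],
                      fingerprints: Mapping[str, Mapping[str, float]]) -> str:
    """Return the location whose stored fingerprint is closest to observed."""
    return min(fingerprints,
               key=lambda loc: fingerprint_distance(observed, fingerprints[loc]))


if __name__ == "__main__":
    print(match_fingerprint({"d1": -51.0, "d2": -69.0, "d3": -86.0}, FINGERPRINTS))
```

Unlike trilateration, this never consults a power curve, so a wall that consistently weakens the signal is simply baked into the stored fingerprint.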

Fingerprinting can supplement or entirely replace trilateration as a positioning technique depending on how aggressively it’s used. In our process, we go the replacement route and rely entirely on fingerprinting. And that’s where the ML comes in.

It turns out, though, that there is SIGNIFICANT signal-to-signal variation in detected strengths, even for identical probes. So a single fixed fingerprint pattern per location doesn’t work any better than trilateration. But by capturing thousands of sample probes, we can use ML to match any set of detected signals to the most likely part of the store.
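
The post doesn’t name a specific model. As one illustration of the idea, k-nearest neighbors turns thousands of noisy samples into a classifier: a new reading gets the majority zone among its closest training samples. This sketch uses synthetic data (made-up zone means and 4 dB Gaussian noise) and a stdlib-only k-NN; it is a stand-in, not necessarily the model used here.

```python
import math
import random
from collections import Counter

# Synthetic example: three zones heard by three detectors. The mean patterns
# and the noise level are made up; real data would come from recorded probes.
ZONE_MEANS = {
    "entrance":    (-50.0, -75.0, -85.0),
    "electronics": (-78.0, -52.0, -70.0),
    "checkout":    (-85.0, -72.0, -51.0),
}
NOISE_DB = 4.0


def make_training_set(samples_per_zone=1000, seed=0):
    """Simulate noisy probes: each reading jitters around its zone's mean."""
    rng = random.Random(seed)
    data = []
    for zone, means in ZONE_MEANS.items():
        for _ in range(samples_per_zone):
            reading = tuple(m + rng.gauss(0.0, NOISE_DB) for m in means)
            data.append((reading, zone))
    return data


def knn_predict(training, observed, k=15):
    """Label observed with the majority zone among its k nearest samples."""
    nearest = sorted(training, key=lambda row: math.dist(row[0], observed))[:k]
    votes = Counter(zone for _, zone in nearest)
    return votes.most_common(1)[0][0]


if __name__ == "__main__":
    training = make_training_set()
    print(knn_predict(training, (-80.0, -55.0, -66.0)))
```

Sorting the full training set for every query is fine for a sketch; a production system would use a spatial index or a different model entirely.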

So there you have it. Our challenge with ML is to create a more accurate positioning system that locates shoppers correctly in real-world spaces. Hopefully, you can also see why no geometric method is ever going to work very well and why machine learning might be the right solution.

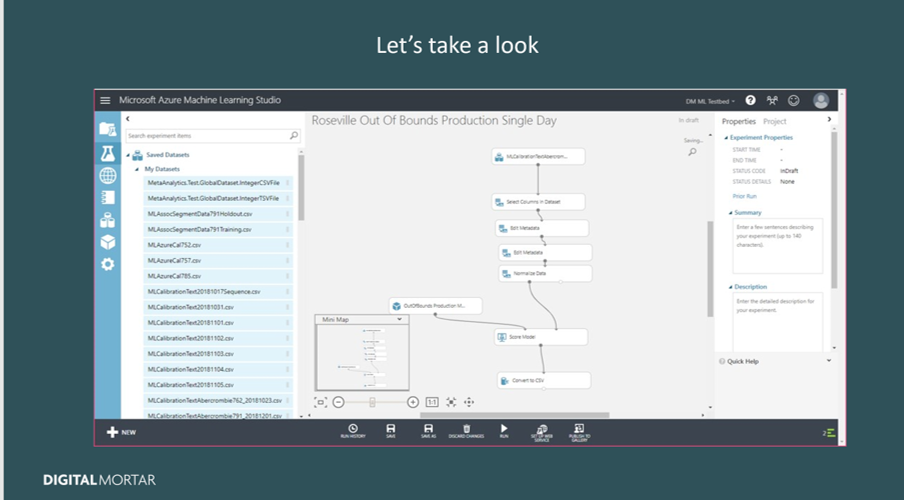

Next stop – Creating a Supervised Learning Data Set…