Store Testing, Continuous Improvement and DM1

By Gary Angel

June 26, 2017

Continuous improvement is what drives the digital world. Whether applied as a specific methodology or simply present in the background against which we do business, the discipline of change and measure is a fundamental part of the digital environment. A key part of our mission at Digital Mortar is simply this: to take that discipline of continuous improvement via change and measurement and bring it to stores.

Every part of DM1 – from store visualizations to segmentation to funnel analytics – is there to help measure and illuminate the in-store customer journey. You can’t build an effective strategy or process for continuous improvement without having that basic measurement environment. It provides the context that lets decision-makers talk intelligently about what’s working, what isn’t and what change might accomplish.

But as I pointed out in my last post, some analytic techniques are particularly useful for the role they play in shaping strategy and action. Funnel Analysis, I argued, is particularly good at focusing optimization efforts and making them easily measurable. Funnels help shape decisions about what to change. Equally important, they provide clear guidance about what to measure to judge the success of that change. After all, if you made a change to improve the funnel, you’re going to measure the impact of the change using that same funnel.

That’s a good thing.

One of the biggest mistakes in enterprise measurement (and – surprisingly – even in broader scientific contexts) is failing to commit to your measurement of success when you start an experiment. It turns out that you can nearly always find some measure that improved after an experiment. It just may not be the right measure. If folks are looking for a way to prove success, they’ll surely find it.
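A rough sketch of the arithmetic behind that claim may help. It is not something DM1 computes; it assumes, purely for illustration, that each candidate metric is independent and has a 5% chance of showing a "significant" improvement when nothing actually changed. The function name and the 5% figure are my assumptions.

```python
def chance_of_spurious_win(num_metrics: int, false_positive_rate: float = 0.05) -> float:
    """Probability that at least one of `num_metrics` independent metrics
    shows a false improvement when the change had no real effect.

    Assumes independent metrics and a fixed per-metric false positive rate;
    both are simplifying assumptions for illustration.
    """
    # P(no metric falsely improves) = (1 - rate) ** n, so take the complement.
    return 1.0 - (1.0 - false_positive_rate) ** num_metrics


if __name__ == "__main__":
    for k in (1, 5, 20, 50):
        print(f"{k:>2} metrics checked: {chance_of_spurious_win(k):.0%} chance of a spurious win")
```

Under these assumptions, an analyst who checks 20 metrics after the fact has roughly a 64% chance of finding at least one that "improved" by chance alone. Committing to a single success measure before the test keeps that chance at the 5% you signed up for.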

Since we expect our clients to use DM1 to drive store testing, we’ve tried to make it easy on both ends of the process. Tools like funnel analysis help analysts find and target areas for improvement. At the other end of the process, analysts need to be able to easily see whether changes actually generated improvement.

This isn’t just for experimentation. As an analyst, I find that one of the most common tasks I have to do is compare numbers. By store. By page. By time-period. By customer segment. Comparison provides basic measurement of change and context on that change.

Which makes comparison the core capability necessary for analyzing store tests but also applicable to many analytics exercises.

Though comparison is a fundamental part of the analytic process, it’s surprising how often it’s poorly supported in bespoke analytics tools. It took many years for tools like Adobe’s Workspace to evolve – providing comprehensive comparison capabilities. Until quite recently in digital analytics, you had to export reports to Excel if you wanted to lay key digital analytic data points from different reports side-by-side.

DM1’s Comparison tool is simple. It’s not a completely flexible canvas for analysis. It just takes any analytic view DM1 provides and allows you to use it in a side-by-side comparison. Simple. But it turns out to be quite powerful in practice.

Suppose you’re running a test in Store A with Store B as a control. DM1’s comparison view lets you lay those two Stores side-by-side during the testing period and see exactly what’s different. In this view, I’ve compared two similar stores by area looking at which areas drove the most shopper conversions:

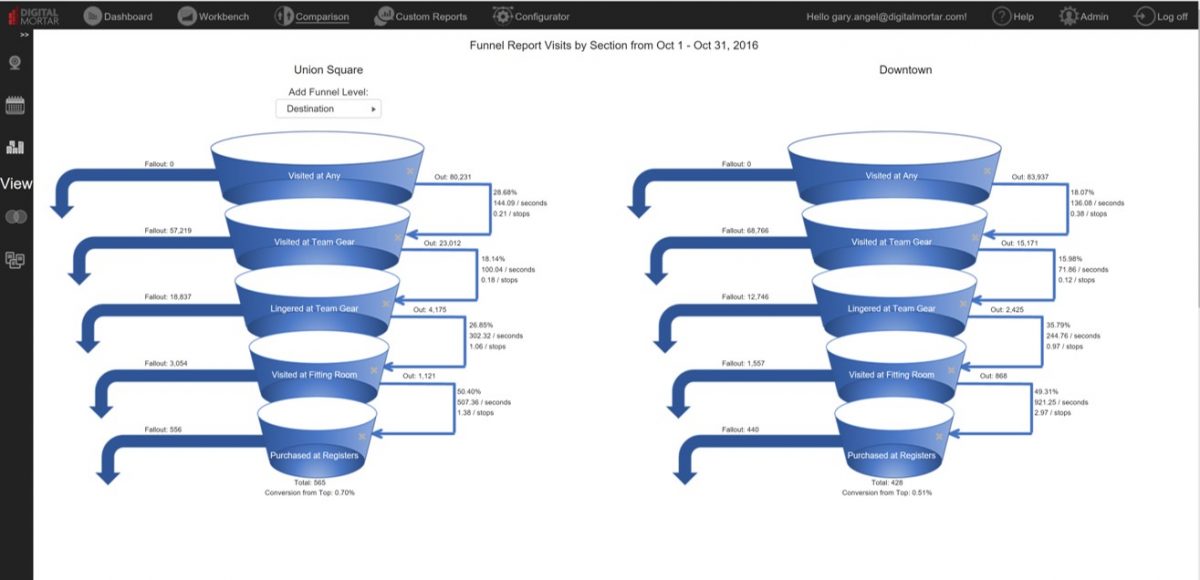

You can use ANY DM1 visualization in the Comparison. The funnel, the Store Viz or traditional reports and charts. In this view, I’ve compared the Shopper Funnel around a single merchandising category at two different stores. Not only can I see which store is more effective, I can see exactly where in the funnel the performance differences occur:

Don’t have a control store? If you’re only measuring the customer journeys in a single store or if your store is a concept store, you won’t have another store to use as a control. No problem, DM1’s comparison view lets you compare the same store across two different time periods. You can compare season over season or consecutive time periods. You don’t even have to evenly match time periods. Here I’ve compared the October Funnel to Pre-Holiday November:

Store and Date/Time are the most common types of comparison. But DM1’s comparison tool lets you compare on Segments and Metrics as well. I often want to understand how a single segment is different from other groups of visitors. By setting up a segmentation visualization, I can quickly page through a set of comparison segments while holding my target group constant. In the first screen, I’ve compared shoppers interested in Backpacks with shoppers focused on Team Gear in terms of how effective interactions with Associates are. With one click, I can do the same comparison between Women’s Jacket shoppers and Team Gear:

The ability to do this kind of comparison in the context of the visualizations is unusual AND powerful. The Comparison tool isn’t the only part of DM1 that supports comparison and contextualization. The Dashboard capability is surprisingly flexible and allows the analyst to put all sorts of different views side by side. And, of course, standard reporting tools like Charts and Tables provide significant ways to do comparisons. But particularly when you want to use bespoke visualizations like Funnels and DM1’s store visualizations, having the ability to lay them side by side and quickly adjust metrics and view parameters is extraordinarily useful.
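For readers who want to see the logic of a side-by-side funnel comparison outside of DM1, here is a minimal sketch. It is not DM1's implementation or API. The stage names, the shopper counts and the function names are all hypothetical, made up purely for illustration.

```python
from typing import List, Tuple

# Hypothetical funnel stages and made-up shopper counts (illustration only).
STAGES = ["Entered store", "Visited category", "Lingered",
          "Associate interaction", "Purchased"]
store_a = [1000, 400, 220, 90, 45]   # e.g. the test store
store_b = [1200, 420, 150, 70, 30]   # e.g. the control store


def step_rates(counts: List[int]) -> List[float]:
    """Share of shoppers at each stage who go on to reach the next stage."""
    return [nxt / cur if cur else 0.0 for cur, nxt in zip(counts, counts[1:])]


def compare_funnels(a: List[int], b: List[int]) -> List[Tuple[str, float, float, float]]:
    """Return (step label, rate in a, rate in b, a minus b) for each funnel step."""
    rows = []
    for i, (rate_a, rate_b) in enumerate(zip(step_rates(a), step_rates(b))):
        label = f"{STAGES[i]} -> {STAGES[i + 1]}"
        rows.append((label, rate_a, rate_b, rate_a - rate_b))
    return rows


rows = compare_funnels(store_a, store_b)
for label, rate_a, rate_b, diff in rows:
    print(f"{label:<45} A {rate_a:6.1%}   B {rate_b:6.1%}   diff {diff:+.1%}")

# The step with the largest absolute gap is where the two funnels diverge most.
biggest = max(rows, key=lambda row: abs(row[3]))
print("Largest gap at:", biggest[0])
```

Because the sketch compares step-to-step rates rather than raw counts, the same function works when the two inputs are one store in two time periods of unequal length instead of two different stores.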

If you want to create a process of continuous improvement in the store, having measurement is THE essential component. Measurement that can help you identify and drive potential store testing opportunities. And measurement that can help you understand the real-world impact of change in all its complexity.

DM1 does both.